I still remember the first time I had to explain AI ethics to a room full of excited kids in my garage lab. They had just finished building their own chatbots, and the conversation turned to whether these machines could ever truly be trustworthy. As I looked around the room, I realized that the real challenge wasn’t teaching machines to make good decisions, but rather making sure we, as creators, were making responsible choices about how they’re designed and used. It’s a topic that’s often shrouded in complexity, but one that I believe is essential to get right.

As someone who’s passionate about making technology accessible to everyone, I want to cut through the hype and provide a no-nonsense look at what AI ethics really means. In this article, I’ll be sharing my own experiences, both successes and failures, to provide a realistic perspective on how we can ensure that AI systems are developed and used in a way that benefits society as a whole. My goal is to provide practical advice and insights that you can apply to your own projects, whether you’re a seasoned developer or just starting out. By the end of this journey, I hope to have inspired you to think critically about the role of AI in our lives and to join me in the pursuit of creating a more responsible and inclusive tech community.

Unraveling AI Ethics

Exploring human-centered AI design reminds me of my early days of building computers from scratch. Creating something from nothing taught me the value of transparency in technology. With AI, transparency is crucial because it lets us follow the AI decision-making process and spot potential biases. Designing AI systems with transparency in mind doesn’t automatically make their decisions fair, but it makes unfairness far easier to detect and correct.

Fairness in machine learning is a complex concept, but it’s essential for building trust in AI systems. I’ve seen the cost of opacity firsthand in my garage lab, where kids would often struggle to understand why their code wasn’t working as expected. Explainable AI models tackle the same problem at a larger scale: by revealing which inputs drove a decision, they make it easier to identify and address issues. This, in turn, leads to AI systems that are more accountable, fair, and transparent.
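To make “explainable” concrete, here is a minimal Python sketch of a model that is interpretable by design: a linear score whose every decision breaks down into per-feature contributions (weight times value). The feature names, weights, bias, and threshold are hypothetical values made up for illustration, not a trained model or a method from any particular project.

```python
# A transparent linear scorer: every decision can be explained as the sum of
# per-feature contributions. All names and numbers below are hypothetical.
WEIGHTS = {"hours_practiced": 0.6, "projects_completed": 0.3, "late_submissions": -0.5}
BIAS = -1.0
THRESHOLD = 0.0


def explain_decision(features):
    """Return (decision, score, contributions sorted by absolute impact)."""
    contributions = [(name, WEIGHTS[name] * features[name]) for name in WEIGHTS]
    score = BIAS + sum(value for _, value in contributions)
    # Largest absolute contribution first, so the "why" reads top-down.
    contributions.sort(key=lambda item: abs(item[1]), reverse=True)
    return score > THRESHOLD, score, contributions


if __name__ == "__main__":
    approved, score, reasons = explain_decision(
        {"hours_practiced": 4, "projects_completed": 2, "late_submissions": 3}
    )
    print(f"approved={approved} score={score:.2f}")
    for name, contribution in reasons:
        print(f"  {name}: {contribution:+.2f}")
```

Deep models can’t be read off this directly, which is why post-hoc techniques exist, but the goal is the same: a decision you can trace back to its inputs.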

As I continue to explore the world of AI, I’m excited about the potential of AI transparency techniques to help us build more responsible machines. By prioritizing human-centered AI design, we can build systems that are not only intelligent but also fair and transparent. This approach could change the way we interact with technology, making it more accessible and beneficial to everyone.

Fairness in Machine Learning: A Human Touch

In machine learning, fairness is a crucial factor that can make or break the trust between humans and AI systems. It’s about ensuring that algorithms don’t perpetuate biases, whether intentional or not. I recall a project where I used 3D printing to create custom physical models that visualized how the data was distributed, which made potential biases much easier to spot.
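You don’t need a 3D printer to start looking at your data this way. Here is a minimal Python sketch, assuming the dataset is a list of dictionaries with a group field and a yes/no label (the field names are hypothetical and supplied by the caller). It reports each group’s share of the data and how often that group carries a positive label.

```python
from collections import Counter, defaultdict


def group_report(rows, group_key, label_key):
    """Summarize each group's share of the data and its positive-label rate.

    `rows` is assumed to be a list of dicts; `group_key` and `label_key`
    are hypothetical field names chosen by the caller.
    """
    total = len(rows)
    counts = Counter(row[group_key] for row in rows)
    positives = defaultdict(int)
    for row in rows:
        if row[label_key]:
            positives[row[group_key]] += 1
    return {
        group: {"share": n / total, "positive_rate": positives[group] / n}
        for group, n in counts.items()
    }


if __name__ == "__main__":
    # Toy data, invented for illustration.
    rows = (
        [{"group": "A", "label": 1}] * 4
        + [{"group": "A", "label": 0}] * 2
        + [{"group": "B", "label": 0}] * 2
    )
    for group, stats in group_report(rows, "group", "label").items():
        print(group, stats)
```

A lopsided share or a big gap in positive rates isn’t proof of unfairness by itself, but it tells you exactly where to look more closely.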

In pursuit of transparent decision-making, I believe it’s essential to involve humans in the loop, providing context and oversight to machine learning models. By doing so, we can mitigate the risk of biased outcomes and foster a more inclusive, equitable environment where AI systems can thrive.
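One simple way to put a human in the loop is to let the model handle only the cases it is confident about and send everything else to a person. The sketch below assumes each prediction is an `(item_id, label, confidence)` tuple; the 0.8 threshold is a hypothetical default, not a recommended value.

```python
def triage(predictions, threshold=0.8):
    """Split predictions into an auto-accepted queue and a human-review queue.

    Each prediction is an (item_id, label, confidence) tuple. The 0.8
    threshold is a hypothetical default; tune it for your own project.
    """
    auto_accepted, needs_review = [], []
    for item in predictions:
        _, _, confidence = item
        if confidence >= threshold:
            auto_accepted.append(item)
        else:
            needs_review.append(item)
    return auto_accepted, needs_review


if __name__ == "__main__":
    preds = [("img-1", "cat", 0.95), ("img-2", "dog", 0.55), ("img-3", "cat", 0.80)]
    auto, review = triage(preds)
    print("auto:", auto)
    print("review:", review)
```

Confidence is only one possible trigger; for high-stakes decisions you might route every case to a person and use the model purely as a suggestion.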

The Robot’s Moral Compass: Explainable AI Models

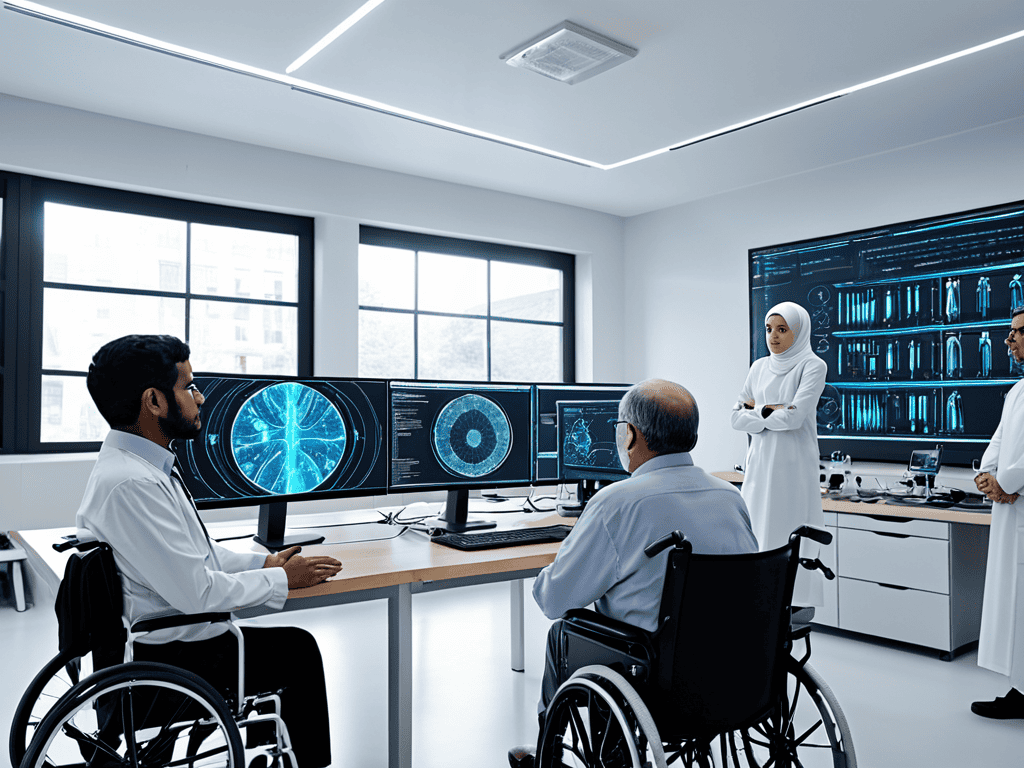

Thinking about AI ethics, I often find myself pondering the moral implications of creating machines that imitate human thinking and behavior. It’s a fascinating yet daunting task, especially when we consider the potential consequences of our choices. I recall a project where I designed a 3D-printed gadget to assist people with disabilities, and it got me thinking about the importance of transparency in AI decision-making.

When it comes to explainable AI models, I believe that simplicity is key. By creating models that are easy to understand and interpret, we can begin to build trust between humans and machines. This, in turn, can lead to more effective collaboration and better outcomes, ultimately paving the way for a future where AI is a force for good, driven by a moral compass that guides its actions.

Designing Accountable AI

Accountable AI reminds me of my 3D printing hobby, where a small miscalculation can ruin a design. Similarly, in human-centered AI design, a minor oversight can result in biased outcomes. To mitigate this, developers are increasingly adopting explainable AI models that provide insights into the AI decision-making process. This transparency is crucial for building trust between humans and machines.

When designing AI systems, it’s essential to prioritize fairness in machine learning. This means recognizing and addressing potential biases in the data, the algorithms, and the outcomes they produce. Doing so is what makes AI systems accountable and transparent in their decision-making. I named one of my mechanical keyboards “Turing” after Alan Turing, whose work laid much of the foundation for modern computing and AI, the very field that today’s transparency techniques aim to make understandable.
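Checking outcomes for bias can start with a single number. The sketch below computes demographic parity difference: the gap between the groups with the highest and lowest rates of positive predictions. It is one common fairness metric among several, and the one you choose should fit the project; the toy predictions and group labels are invented for illustration.

```python
def selection_rates(predictions, groups):
    """Share of positive predictions (e.g. 'approve') within each group."""
    totals, selected = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + (1 if pred else 0)
    return {group: selected[group] / totals[group] for group in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Toy predictions and group labels, invented for illustration.
    preds = [1, 1, 0, 1, 0, 0, 0, 1]
    groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
    print(selection_rates(preds, groups))
    print(demographic_parity_difference(preds, groups))
```

Here group A is selected three times as often as group B, a gap worth investigating even if the model’s overall accuracy looks fine.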

To achieve true accountability, we need to strike a balance between innovation and responsibility. This requires a multidisciplinary approach, involving experts from various fields to develop AI systems that are not only efficient but also transparent and fair. By working together, we can create AI systems that augment human capabilities while maintaining the highest standards of AI system accountability. As I see it, the future of AI depends on our ability to design systems that are both intelligent and responsible.

Holding Hands: Human-Centered AI Design and Accountability

As I reflect on my experiences designing personalized tech gadgets, I realize the importance of human-centered approaches in AI development. By prioritizing the needs and values of users, we can create more responsible and accountable AI systems. This involves considering the potential consequences of AI decisions and ensuring that they align with human values.

In my garage lab, I’ve seen kids create amazing projects that showcase the potential of AI when combined with human creativity. To achieve this synergy, we must focus on developing AI systems that are transparent, explainable, and fair. By doing so, we can build trust in AI and ensure that its benefits are equitably distributed, leading to a more harmonious collaboration between humans and machines.

Transparent Tales: The AI Decision-Making Process

Whenever I think about AI ethics, I find myself pondering the decision-making process behind these intelligent machines. It’s fascinating to consider how an AI system weighs its inputs and arrives at a conclusion in a way that can look like human reasoning from the outside, even though it works very differently underneath. By examining the inner workings of AI decision-making, we can learn how to create more transparent and accountable systems.

In my experience with designing and 3D printing personalized tech gadgets, I’ve learned that explainability is key to building trust in AI. When we can understand how an AI system reaches its decisions, we’re more likely to accept and even embrace its conclusions. This transparency can be achieved through various means, such as providing clear explanations for AI-driven recommendations or offering insights into the data used to inform its decisions.

Navigating the Moral Maze: 5 Key Tips for AI Ethics

- Embrace Explainability: Demand that AI systems provide transparent and understandable explanations for their decisions and actions, just as a friend would explain their thought process

- Champion Diversity and Inclusion: Ensure that the data used to train AI models is diverse, inclusive, and as free from bias as possible, reflecting the complexity of the real world

- Foster Human-Centered Design: Involve humans in every stage of AI development, from conception to deployment, to guarantee that AI systems serve human needs and values

- Cultivate Accountability: Establish clear lines of responsibility and accountability for AI decision-making, so that when things go wrong, we know who to turn to and how to fix it

- Encourage Continuous Learning: Recognize that AI ethics is a dynamic and evolving field, and commit to ongoing education and improvement, just as we would with any other skill or discipline
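The accountability tip is much easier to act on when every decision leaves a trail. Here is a minimal Python sketch of an in-memory audit log, assuming hypothetical field names (`model_version`, `owner`) that record which model made each decision and who is responsible for it. A real system would persist these records somewhere durable.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    decision_id: str
    model_version: str
    owner: str  # person or team accountable for this model (hypothetical field)
    inputs: dict
    output: str
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


class AuditLog:
    """Keeps decision records in memory so any decision can be traced later."""

    def __init__(self):
        self._records = []

    def record(self, rec):
        self._records.append(rec)

    def find(self, decision_id):
        """Return the record for a decision, or None if it was never logged."""
        for rec in self._records:
            if rec.decision_id == decision_id:
                return rec
        return None

    def to_json_lines(self):
        """Export one JSON object per line, e.g. for a log file."""
        return "\n".join(json.dumps(asdict(rec), sort_keys=True) for rec in self._records)


if __name__ == "__main__":
    log = AuditLog()
    log.record(DecisionRecord("d-001", "v1.2", "robotics-club", {"score": 0.5}, "approve"))
    print(log.find("d-001"))
    print(log.to_json_lines())
```

When something goes wrong, the record answers the two questions from the tip: which model produced this, and who do we turn to.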

Key Takeaways: Navigating the Future of AI Ethics

I’ve learned that making AI more transparent and explainable is crucial, much like how I name my mechanical keyboards after famous inventors – it’s about giving credit where credit is due and understanding the ‘why’ behind the tech

Designing AI with a human touch, focusing on fairness and accountability, is not just a moral imperative but a practical necessity for building trust between humans and machines

By embracing a human-centered approach to AI development, we can unlock the true potential of technology to drive positive change, much like how my garage lab brought people together to learn and create – it’s about harnessing the power of tech for the betterment of society

Ethics in Innovation

As we weave the tapestry of AI, let’s not forget that ethics is not a thread, but the very fabric that gives our technological advancements their true meaning and value.

Alex Carter

Embracing the Future of AI Ethics

As we conclude our journey through the realm of AI ethics, it’s clear that unraveling the complexities of artificial intelligence requires a multifaceted approach. From explainable AI models that shed light on the decision-making process, to human-centered design that prioritizes accountability and fairness, the path forward is paved with challenges and opportunities. By acknowledging the human touch that machine learning needs and striving for transparency in AI decision-making, we can work towards a future where technology serves humanity with compassion and integrity.

The ultimate goal of AI ethics is not to constrain innovation, but to empower it with a sense of purpose and responsibility. As we stand at the threshold of this new frontier, I’m reminded of the kids in my garage lab, their eyes sparkling with wonder as they brought their creations to life. Let’s harness this same sense of curiosity and creativity to shape an AI landscape that is not only intelligent, but also wise and just, inspiring generations to come.

Frequently Asked Questions

How can we ensure that AI systems are transparent and explainable in their decision-making processes?

To make AI systems transparent and explainable, I believe in using techniques like model interpretability and feature attribution, which help us understand how machines arrive at their decisions. It’s like debugging my 3D printed gadgets – you need to see inside the process to fix any flaws, making AI more trustworthy and accountable.
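Feature attribution sounds abstract, but one of its simplest forms, permutation importance, fits in a few lines: shuffle one feature at a time and measure how much the model’s accuracy drops. The pure-Python sketch below works with any callable model; the toy model and data are invented for illustration. (If you already use scikit-learn, it ships a ready-made version as `sklearn.inspection.permutation_importance`.)

```python
import random


def accuracy(model, X, y):
    """Fraction of rows where the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=10, seed=0):
    """Average accuracy drop when each feature's column is randomly shuffled.

    X is a list of equal-length lists of feature values; model is any
    callable that maps one row to a predicted label.
    """
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)
            # Rebuild the rows with only column j shuffled.
            X_shuffled = [row[:j] + [value] + row[j + 1:] for row, value in zip(X, column)]
            drops.append(baseline - accuracy(model, X_shuffled, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_model(row):
    """Toy model that only looks at feature 0; feature 1 is ignored."""
    return int(row[0] > 0.5)


if __name__ == "__main__":
    X = [[i / 20, (i * 7 % 20) / 20] for i in range(20)]
    y = [toy_model(row) for row in X]
    print(permutation_importance(toy_model, X, y))
```

The ignored feature scores exactly zero, while the feature the model relies on shows a clear accuracy drop, which is the kind of “look inside” that makes debugging a model feel like debugging a gadget.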

What are the potential consequences of biased AI models on society, and how can we mitigate these effects?

Biased AI models can perpetuate social inequalities, exacerbate discrimination, and undermine trust in technology. To mitigate this, we need diverse training data, regular audits, and human oversight to ensure fairness and transparency, ultimately fostering a more inclusive and equitable digital landscape.
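A regular audit can be as simple as re-running a check like the one below on fresh, labeled data. This sketch tests equal opportunity: whether the model catches true positives at similar rates across groups. The `max_gap=0.1` tolerance is a hypothetical value, not an established standard, and the toy labels are invented for illustration.

```python
def true_positive_rates(y_true, y_pred, groups):
    """Per-group share of actual positives that the model correctly flagged."""
    positives, hits = {}, {}
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] = positives.get(group, 0) + 1
            hits[group] = hits.get(group, 0) + (1 if pred == 1 else 0)
    return {group: hits[group] / positives[group] for group in positives}


def audit_equal_opportunity(y_true, y_pred, groups, max_gap=0.1):
    """Fail the audit if true positive rates differ across groups by more than max_gap.

    max_gap=0.1 is a hypothetical tolerance chosen for illustration.
    """
    rates = true_positive_rates(y_true, y_pred, groups)
    gap = max(rates.values()) - min(rates.values())
    return {"tpr_by_group": rates, "gap": gap, "passed": gap <= max_gap}


if __name__ == "__main__":
    # Toy labels and predictions, invented for illustration.
    y_true = [1, 1, 0, 1, 1, 0]
    y_pred = [1, 1, 1, 1, 0, 0]
    groups = ["A", "A", "A", "B", "B", "B"]
    print(audit_equal_opportunity(y_true, y_pred, groups))
```

Running it on a schedule, and having a human review every failed audit, turns “regular audits and human oversight” from a principle into a habit.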

Can AI systems be designed to prioritize human values and ethics, and if so, what framework should be used to guide their development?

I believe AI can indeed be designed with human values at its core. To achieve this, I propose a framework that integrates empathy, transparency, and accountability, allowing AI systems to learn from human feedback and adapt to our ethical standards, much like my “Tesla” keyboard learns my typing habits.